Provable Safe Reinforcement Learning with Binary Feedback, Bennett et. al. 2022

Setting

- RL problem

- Agent cannot take unsafe actions during training

- Safety function unknown, can use human feedback to query whether an action is safe

- Feedback happens offline between episodes

- Minimized feedback

Setup / Notation

Rewardless MDP: $M = (\mathcal{S}, \mathcal{A}, T, \mu, H)$

- $H \in \mathbb{N}$ is the horizon

Policy $\pi: \mathcal{S} \to \Delta(\mathcal{A})$, possibly policies $\Pi$

Suboptimality metric:

$$\texttt{SubOpt}(\hat{\pi};\Pi) := \sup_{\pi \in \Pi} V(\pi) - V(\hat{\pi})$$Generic RL algorithm: we have access to a blackbox RL algorithm $\texttt{Alg}$ for a set of reward-free MDPs $\mathcal{M}$ with $M \in \mathcal{M}$.

Given

- $R': \mathcal{S} \times \mathcal{A} \to [0, 1]$

- $\epsilon, \delta \in (0, 1)$

- $\texttt{Alg}(R', \Pi, \epsilon, \delta)$ returns a policy $\hat{\pi} \in \Pi$ such that $\texttt{SubOpt}(\hat{\pi}, \Pi) \leq \epsilon$ with probability $\geq 1-\delta$.

- Moreover, it is guaranteed to do so for $n_{\texttt{Alg}}(\epsilon, \delta)$ episodes for some polynomial $n_{\texttt{Alg}}$ – this is the sample complexity

- (I’m assuming we need the reward space to be $[0, 1]$ in order for such bounds to be possible)

Safety oracle: we have access to $f^* : \mathcal{S} \times \mathcal{A} \to \pm 1$, where $f^*(s, a)= 1$ if and only if $(s, a)$ is safe

We assume $f^*$ is a member of some hypothesis class $\mathcal{F}$ with finite VC dimension, and this class is known

We use this to define a set of safe policies

$$\Pi_{\text{safe}}(f) = \{\pi_{\text{safe}}\} \cup \{\pi \in \Pi:f(s, \pi(s)) = 1 \text{ a.s.} \ \forall s \in \mathcal{S}\}$$Goal: Find $\hat{\pi}$ with

$\texttt{SubOpt}(\hat{\pi}; \Pi) \leq \epsilon$ ($\hat{\pi}$ is approximately optimal)

$\hat{\pi} \in \Pi_{\text{safe}}$

The agent never takes unsafe actions during training

Algorithm

- For any safety-labelled dataset $\mathcal{D}$ of tuples $(s, a, f^*(s, a))$, let the version space $\mathcal{V}(\mathcal{D})$ be the subset of $\mathcal{F}$ consistent with the data.

- Moreover, for $a \in \mathcal{A}$ we define the disagreement region $RD^a(\mathcal{D})$ to be the set of states such that $(s, a)$ does not agree on all of $\mathcal{V}(D)$.

- Finally, let $\Pi(\mathcal{D})$ give the set of verifiably safe policies, i.e., $$\Pi(D) := \bigcap_{f \in \mathcal{V}(\mathcal{D})} \Pi_{\text{safe}}(f)$$

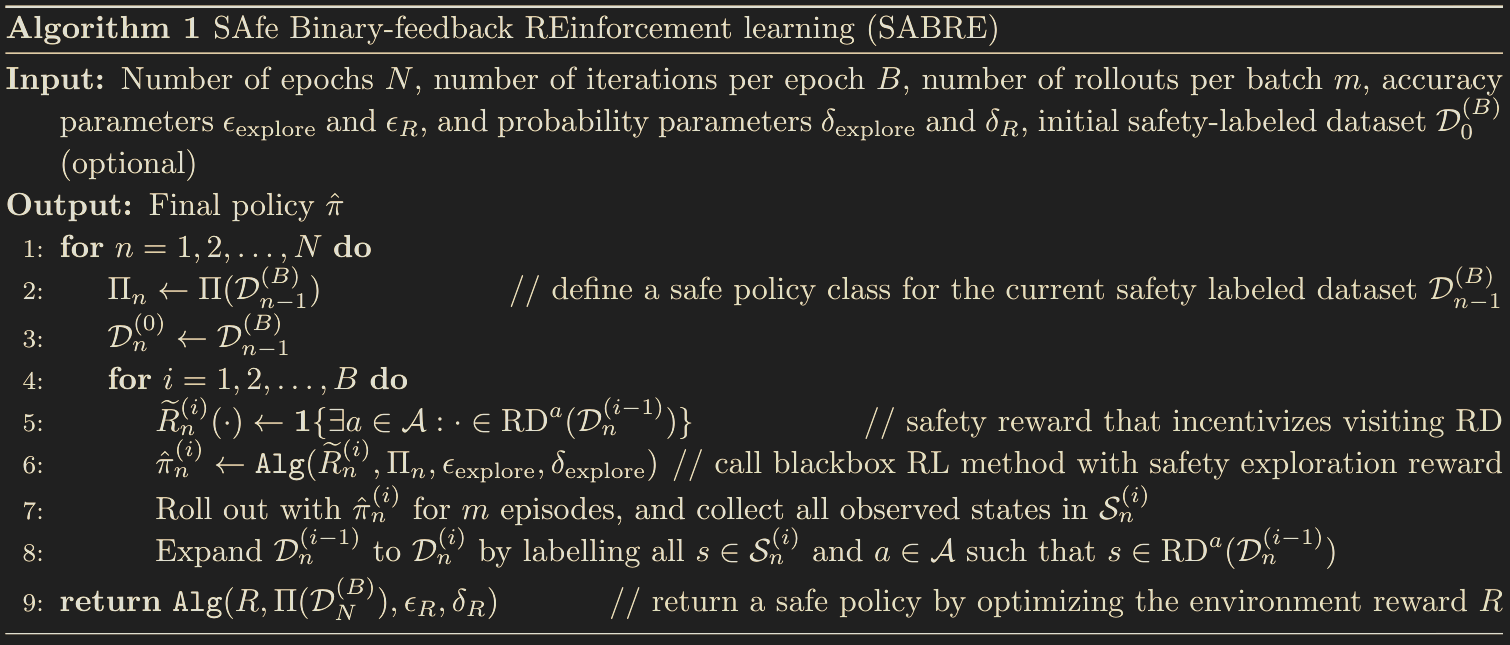

The the SAfe Binary-feedback REinforcement learning (SABRE) algorithm can be defined as follows:

- It takes in epochs $N$, batches $B$, rollouts $m$, accuracy parameters $\epsilon_{\text{explore}}$ and $\epsilon_{R}$ and probability parameters $\delta_{\text{explore}}$ and $\delta_R$

- The algorithm is pretty brute force. Basically for each epoch

- We define a reward function $R(s, a) = \mathbf{1}\{\exists a' \in \mathcal{A}:s \in RD^{a'}(\mathcal{D})\}$

- This incentivizes visiting states that have disagreement actions, but does not incentivize taking disagreement actions due to the requirements that our policy must be safe.

- Note that the original reward $R$ is not used here at all. We could be spending a ton of time on useless state / actions, and this seems like a major inefficiency of the algorithm.

- Then find $\pi$ with $\texttt{Alg}$ called on $R$ and $\Pi(\mathcal{D})$

- Finally, label all actions where there are disagreements

- We define a reward function $R(s, a) = \mathbf{1}\{\exists a' \in \mathcal{A}:s \in RD^{a'}(\mathcal{D})\}$

- After doing all of this, return a safe policy using the original reward

Theoretical Results

Policy-Cover Dimension: There exists some positive integer $d_{\Pi}$ such that, for any given set of policies $\Pi \subseteq \Pi_{\text{safe}}$, there exists a set of policies $\pi_1, \ldots, \pi_{d_\Pi}$ satisfying

$$ \frac{1}{H}\sum_{h=1}^H \mathbb{P}\left[s_h \in \tilde{\mathcal{S}}\right] \leq \sum_{i=1}^{d_\Pi} \left(\frac{1}{H}\sum_{h=1}^H \mathbb{P}_{\pi_i}(s_h \in \mathcal{\tilde{S}})\right) $$for all measurable $\mathcal{\tilde{S}} \subseteq \mathcal{S}$ and $\pi \in \Pi$. $d_\pi$ is the policy-cover dimension.

For finite-state environments, this can be rewritten in terms of occupancy measure as

$$\eta^{\pi}\cdot \mathbf{1}_{s} \leq (\eta^{\pi_1} + \ldots + \eta^{\pi_{d\Pi}}) \cdot \mathbf{1}_s$$for any $s \in \mathcal{S}$, where $\mathbf{1}_s$ gives $1$ for each state-action pair with $s$.

Disagreement Coefficient: For any $\pi \in \Pi$, $h \in [H]$, $r \geq 0$ denote

$$B_{h, \pi}(r) = \{f \in \mathcal{F}: \mathbb{P}_\pi[f(s_h) \neq f'(s_h)] \leq r\}$$and the disagreement coefficient

$$\theta_{h, \pi}(r_0) = \sup_{r > r_0}\frac{\mathbb{P}[\exists f, f' \in B_{h, \pi}(r):f'(s_h) \neq f(s_h)]}{r}$$Assumption: There exists some fixed $d_\theta < \infty$ such that $\theta_{h, \pi}(0) < \infty$ for all $h$ and $\pi$.

Theorem:

Let

- $d_{VC}$ denote the VC dimension of $\mathcal{F}$

- Let $\epsilon, \delta$ be given

- Then with carefully-tuned parameters (that I don’t want to write out), we have that with probability $\geq 1-\delta$

- The sample complexity is $$ \mathcal{O}\left(H^4\epsilon^{-1}\log(\delta^{-1})d_{\pi}d_\theta^2d_{\mathsf{VC}}\right) $$ $$ \mathcal{O}\left(A\log(\epsilon^{-1})\log(\delta^{-1})H^2d_{\Pi}d_{\theta}^2d_{\mathsf{VC}}\right) $$