Safe Exploration in Reinforcement Learning: A Generalized Formulation and Algorithms

Preliminaries

- Constrained Markov decision process: $\mathcal{M} = \langle \mathcal{S}, \mathcal{A}, H, \mathcal{P}, r, g, s_i\rangle$

- $g: \mathcal{S} \times \mathcal{A} \to [0, 1]$ is a cost function

- $s_1$ is an initial state

- Bellman Operator: $$\mathcal{T}_h(Q)(s, a) = \mathbb{E}[r(s, a) + \gamma V_Q(s_{h+1})]$$

Problem

GSE Problem:

$$\max_\pi V^{\pi} \text{ subject to }\mathbb{P}\left[g(s_t, a_t) \leq b_t\right] = 1 \ \text{ for each } t$$Assumptions:

- Safety Margin: There exists $\xi \in \mathbb{R}_{>0}$ such that $\mathbb{P}_{\pi^*}[g(s_h, a_h) \leq b_h - \xi] = 1$ for all $h$

- Emergency stop action: you can choose to emergency stop at each state, so every state has a safe action

- $\delta$-Uncertainty Quantifier: Let $\mu:\mathcal{S} \times \mathcal{A} \to \mathbb{R}$ denote the estimated mean function of safety. There exists a $\delta$-uncertainty quantifier $\Gamma: \mathcal{S}\times\mathcal{A} \to \mathbb{R}$ such that $$|g(s, a) - \mu(s, a)| \leq \Gamma(s, a)\ \forall s, a$$

with probability $\geq 1-\delta$

- This is encoding “we can estimate uncertainty”, and is perhaps a strong assumption

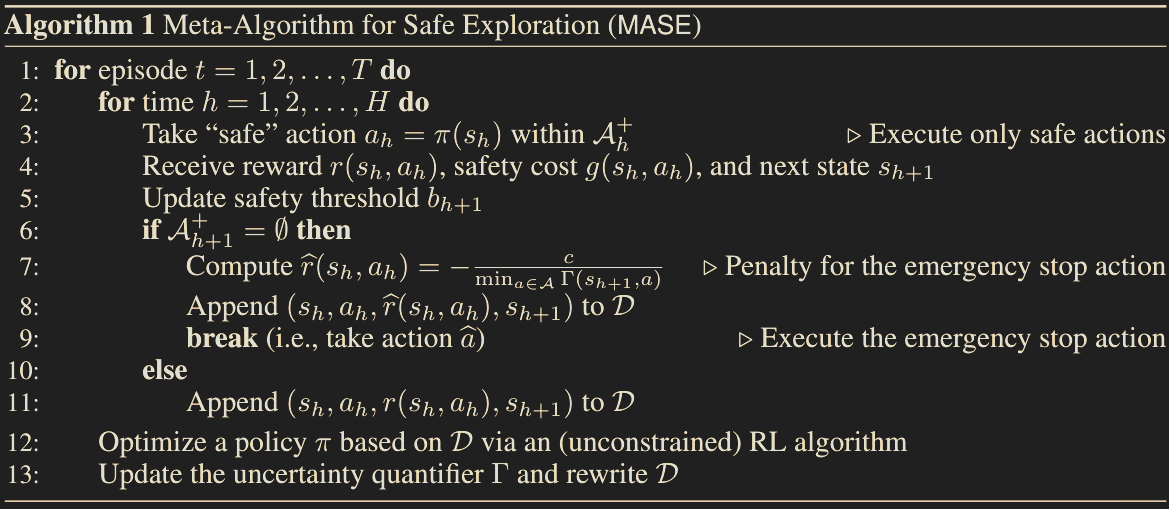

Method

- Safe action set: $$\mathcal{A}_h^+ = \{a \in \mathcal{A} \mid \min(1, \mu(s_h, a)\ + \Gamma(s_h, a) \leq b_h\}$$

- Rewriting constrained MDP as unconstrained:

- $\hat{\mathcal{M}} = \langle \mathcal{S}, \{\mathcal{A}, \hat{a}\}, H, \mathcal{P}, \hat{r}, \rho\rangle$

- We have $$ \hat{r}(s_h, a_h)= \begin{cases}

- \frac{c}{\min_{a \in \mathcal{A}}\Gamma(s_{h+1}, a)} & \text{if }\mathcal{A}_h^+ = \varnothing,\ r(s_h, a_h) & \text{otherwise} \end{cases} $$

Theorem: Guarrantees safety with probability $\geq 1- \delta$ Theorem: Assume $\Gamma(s, a) \leq \frac{\xi}{2}$ for $\xi$ defined in in the safety margin. Set $c > \frac{\xi V_{max}}{2\gamma^H}$. Then the optimal policy of $\hat{\mathcal{M}}$ is identical to that in $\mathcal{M}$.

GLM

GLM: Let $d \in \mathbb{Z}_{> 0}$ be a feature dimension and $\mathbb{B}^d = \{z \in \mathbb{R}^d \mid ||x||_2 \leq 1\}$ be a ball in $\mathbb{R}^d$.

Thoughts

- The $\Gamma(s, a) \leq \frac{\epsilon}{2}$ assumption is pretty impractical; this already assumes you have very low uncertainty